I've purchased a second hand Pixel

6a, codename: bluejay.

I am looking at running LineageOS on it, perhaps not immediately though. Here are the builds. Here's the install guide.

First I needed to unlock the bootloader. Here's what I needed to do:

- Activate developer mode by tapping the build number seven times.

- Enable USB debugging; the device will ask to trust your computer.

- Now, the developer options will display an option "OEM unlocking".

The 'OEM unlocking' option controls whether the bootloader is locked. This

determines whether you can flash untrusted software onto the device using the

fastboot tool. Boot into the bootloader using adb -b reboot bootloader.

I started up my device without connecting to any networks. When I started it, the 'OEM unlocking' option was greyed out. I read around the issue, and it seemed that there was a chance I had received a secondhand Verizon handset. Secondhand Verizon handsets are permanently OEM locked, which means you must always run stock firmware on them. However, in reality my handset was not one of these. A phone with an unlockable bootloader is referred to as 'factory unlocked' (contra 'carrier unlocked'). My handset had been sold as 'factory unlocked', so should be fine.

In fact, all I had to do was to allow the handset to update as much as possible, ensuring I had the latest security updates for Android 16. Then, factory reset the device. Having done this, the 'OEM unlocking' option un-greyed itself after re-setting up and enabling developer mode. So it seems that it may require not only a reset, but also a complete update.

In the autumn of 2025 I read André Gide's memoir, 'If It Die' (Si le grain ne meurt). The title, which may seem strange to some, comes from a Bible text:

Except a corn of wheat fall into the ground and die, it abideth alone: but if it die, it bringeth forth much fruit.

I cannot recall at present whether the verse is referred to, directly or obliquely, in the text itself. It's clear that there is something of the 'death-and-rebirth' within the text, particularly Gide's relationship to his personal bodily excess.

The point here is to bring a list of some of the key real-life locations which are present in this memoir, and for the purposes of brevity I keep it only to the first part of the book (thus excluding the travels in North Africa).

Paris -- Gide was born in the Rue de Médecis, (sixième arrondissement). The Jardin du Luxembourg plays a big part in his childhood. He will return numerous times throughout the text. Later moves to the Rue de Commaille, septième arrondissement.

La Roque-Baignard -- the department of Calvados, Normandy, northwestern France. The link is on Gide's mother's die, Juliette Rondeaux.

Rouen the large city and capital of Normandy, and the link is to the mother.

Uzès a commune in Southern France, where his father from a poorer background is linked to. Nîmes itself is also an important location.

O little town of Uzès! if you were in Umbria, how the tourists would flock from Paris to visit you!

Cuverville -- Gide's uncle's place. This is where the opening to La porte étroite also takes place, with the 'dark walk'.

Hyères -- a holiday location where they spend the winter. Costebelle is a subdivision of the town.

Lérins -- visited, while also visiting Cannes, and marvels at the rock-pools.

Trip to Brittany -- we know at least that Gide visited the following locations: Le Pouldu, Quiberon, Quimper.

Pierrefonds -- took temporary lodgings here.

Annecy and Menthon -- important location where 'Cahiers of Andre Walter' was written.

Lotzwil is where the Gide's parents' Swiss maid, Marie, eventually went to live.

For more information consult the book André Gide, A Life in the Present by Alan Sheridan.

I've spent a couple of weeks in Morocco. Here's a small travel guide with a few of my observations.

Currency & Money Usage

Moroccan Dirhams, MAD, is the currency. It's essentially pegged to the Euro at a 10:1 exchange rate (100 dirhams is about 1 euro; not 100% accurate but probably all you need to know). You can't get it before you go. You have to get dirhams at the Morocco airport, either that or attempt to use Euros until you can access an exchange, of which there are many. You can use ATMs at the airport, but be aware there will likely be a large queue.

When using ATMs, there doesn't seem to be a way to avoid getting charged a fixed fee for the use of the international ATM. All banks that I could see charge this fee. Likely you know this already, but I recommend using a travel-focused credit card to avoid currency conversions, and always select the MAD value when using an ATM.

In general, all transactions are in cash, card payments are not a thing. However, haggling seems to be restricted to certain areas, e.g. souks when buying potentially higher-value items. I don't think it's appropriate to haggle e.g. for prepared food.

The discussions of tipping seem to divide people very much. Most sources seem to say "it's customary", however it's unclear whether Moroccans actually tip. Rounding the bill to the nearest value seems to be the most common way to do it, alternatively keep change around. In my opinion you should never tip more than 10%. The minimum you can tip is 2DH, otherwise just don't bother.

The ATMs will mostly dispense 100DH and 200DH notes. 200DH notes are a pain to deal with, because they're too large. However probably the majority of shops will be ok with you offering large notes, they are likely to have change unless they have only just opened. If they don't, just move to the next one.

Toilet attendants are a thing in Morocco and they will want to charge you 2DH for the privilege of spending a penny. This can be problematic as you may not have the change in which case leave whatever you have, but try to make sure you always have some 1DH coins on your person.

Rough cost guide

This is what I was paying but I might have been being ripped off.

- Coffee: between 5 and 20 DH. See note on Coffee

- Water: <10DH in a shop.

- Street food sold by piece e.g. savory fritters, 5DH a piece.

- Snack bar food: 10 to 40DH.

- Fancier restaurant: up to 80DH for a single main, with menus/formulas up to

- 120DH. For 60-80DH you should be getting something a properly filling meal.

- Full grand taxi journey 30 minutes: 10DH

- Full grand taxi journey 1 hour: 35DH

- Petit taxi: 20/30DH for like 20 minutes.

- Train Rabat to Marrakesh: 200DH

- Intra city CTM / Supratours buses tend to be around 100DH - 200DH.

- Hotel laundry service: 5DH.

In general, nothing should ever be more expensive than Europe, but at the same time it's not right to assume that absolutely everything will be significantly cheaper than Europe.

Water

The tap water is chlorinated. I never got ill from it but I only used it for toothbrushing and maybe some icecubes. I found the flavour unpleasant though. Buying water is quite a pain, if I stayed longer I would likely start drinking the tap water. If you're moving a lot then it's impractical to carry around large bottles of tap water, but at the same time it sucks having to continually monitor and ration your water use.

Language

Moroccan Arabic is the main language. French is used on most but not all signage. Most people speak at least some French but you shouldn't assume people are fluent in French. In cities, many young people speak good English, but in general your default assumption should be that people don't speak any English. It'll be useful for you to at least learn basic phrases in Moroccan Arabic, hello and thank you, consult a guide book for that. I used French for all numeric discussion.

Rs are always trilled and people frequently won't understand you if you speak with a non-trilled R.

Alcohol

Most places aren't licenced to sell alcohol. Lots of places serve mocktails, I had a delicious virgin mojito. You can get Casablanca beer on draught in tourist-focused pubs.

Travel

There are lots of ways to travel.

Local buses are cheap but poorly signposted, stops just seem to exist in people's heads largely, and people flag down the buses when they want to get on. There are a few labelled arrêt on the road, if you see people waiting on the pavement for no obvious reason there's a fair chance they are waiting for a bus.

Petit taxis are cheap but bear in mind that you will likely be overcharged as a tourist unless you haggle. You might also end up sharing a taxi because the driver will pick up people on the road who are going the same route, this is totally normal and in this case every passenger pays the full rate of their journey. Meters (compteur) exist and you should try to get drivers to use them, but in tourist areas drivers sometimes refuse your business rather than use the meter.

Grand taxis are an excellent system whereby people share rides in large cabs. These can drive outside of city limits. They are an excellent means of getting from city to city where the hop is relatively small. For long journeys they might not be that comfortable, though. There's a fixed set of routes. Travel books should contain lists of grand taxi routes that are close-enough to being accurate, but as a general rule you'll always be able to get grand taxis to geographically-near settlements, and even some that are quite far away. To get one you walk up to the terminal and ask someone! It's not always that clear who to ask, but sometimes people will be shouting out destinations, and they will point you in the right direction for your destination. Get in and wait for the ride to start, it'll start when you have 6 people. There doesn't seem to be any obvious protocol around where to sit so just sit where you want. You'll be charged for the ride BEFORE the ride starts and it should be quite cheap. In my limited experience, people don't talk too much during the ride itself, but they'll often chat a bit while waiting for it to fill up.

Coaches are run by Supratours and CTM. Supratours is a branch of ONCF, who are the public train operator patterned after the French one. There's not a lot to choose between these two companies. They both run comfortable and reliable services. However the coaches do not have wifi or toilets. The latter is quite tricky, for long trips the Supratours coaches will make rest stops so that you can use the toilet. You may get just 1 rest stop for the entire journey though (this is what I experienced on the night bus Marrakech -> Fes). When you book a ticket you get given a seat allocation (place) which you must sit in, unless there's very few people on the bus in which case you may be able to just sit wherever you like. All people will be trying to find their allocated seat. The problem with coaches is that they often stop very far outside of town -- in this case you'll need to figure out internal transport from the bus depot to the city centre. Sometimes they'll come into the central gare routière or sometimes they will have their own stop. Other passengers will board the coach mid journey from offices which are run by the company. Buses have air conditioning to cool down, but they don't have anything to heat up -- this not being a problem most of the time. However, in the cold regions like the Middle Atlas in the early morning and night, it can actually get quite cold, so bring at least a jumper and ideally more if this is your situation.

Trains are fast and comfortable but can be busy. They seem to be quite privatised like in the UK which means the exact arrangement is a mixed bag: sometimes you might be in a traditional-style compartment facing other people. Again, unlike the UK, booking a ticket gives you a fixed place on the train, which you must sit in. There are distinct 1st and 2nd class sections of the train. Make sure you sit in the right carriage (voiture) and seat (place). They do check tickets, an inspector comes around and scans a QR code on the ticket. There are no boards or announcements showing the calling destinations, but every stop has a board saying e.g. "GARE DE MEKNES" so you need to look out for your stop. The platforms (voie) themselves do have electronic boards showing calling destinations and departure time in Arabic and French.

Morocco is a papers-please society. When you're doing inter-town travel, always carry a copy of your passport.

Weather

I arrived in mid November, this was a good time to arrive. I'd say that once it reached December, it was starting to get too cold. The optimal way would probably be to do your trip spanning November, perhaps the start of November. The sun does make up for it, because even when the temperature is quite cold, the brightness of the sun still heats you up (and can burn you, although it's not actually too bad in November.) The mornings can be very cold. Still don't overplay this: most of the day it's sunny and warm.

Coffee

Coffee is very widely served in Morocco and is usually made in espresso machines. However there are lots of different things that pass for coffee. In general they follow the French system. If you ask for cafe noir you'll get a small ('short') drink. You can try asking for a cafe americain but what you get for this is very mixed. Most drinks will still be quite short and strong compared to the UK, I had to accept that to a large extent I simply wasn't going to be able to get a large heavily diluted Americano like you can get in the UK. It's common to add sugar to coffee and in the case of the cafe noir it's perhaps not a bad idea as it mitigates some of the bitterness. You can stop throwing stones at me now...

Tea

Atay, thé minthe, laughingly called Moroccan whisky, is something you should definitely encounter. The barman will give you the teapot and some mint leaves, put the mint leaves into the pot, pour it out, then repour the glass back into the pot, repeat the process 3 times. Seems to be traditionally drunk with sugar cubes. Overall, this is a pretty great drink and I understand why Moroccans like it.

Food

There is a fuzzy distinction between a restaurant-proper, and a takeaway-style venue. You can normally sit down to eat even at the takeaway-type venues which label themselves as 'snack bars', though they do serve full meals, not only snacks. Not all eating-establishments will have menus, if they have a menu it might only be in Arabic or it might be in French. Just because a venue displays graphics or names of a dish doesn't mean that it necessarily serves that dish! If you sit down you normally pay after eating. You can ask for food 'à emporter' to get it wrapped, nearly everyone will do this.

There's also proper 'street food' where you just buy single pre-made pieces from the stall, you can expect to pay 5DH or less for them.

In snack bars and restaurants, portion sizes tend to be large (apparently this is a Mediterranean thing) but this is not 100% guaranteed. Don't order a full menu / formula without being pretty hungry.

Many dishes are served with frites even when you might not expect them to be. The frites are often undersalted, unfortunately.

Layout

Nearly all towns have at least two distinct parts, a Medina and a Ville Nouvelle. Medinas are car-free and often quite hard to navigate as GPS doesn't always work in narrow streets. In some places the Medina can function as a completely self-contained area, in other places the Medina is more of an add-on and the Ville Nouvelle is much larger.

Accommodation

Some hotels will want to be paid in cash, others will accept cards as in booking.com.

The standard of accommodation is rather low, but the value is still quite good. In the UK and Western Europe, the minimum you can pay for a place to stay is about £50 a night (~60MAD), which gets you a decent level of comfort. In Morocco you can pay a lot less than this, it's possible to pay more like £20 a night, but your level of comfort will be correspondingly lower. So for instance, accommodation is often deficient in these areas:

- Access to a chair and table

- Lighting beyond a main light

- Placement of AC outlets

- Consistent hot water in the shower

- A/C (or if they have A/C the heating mode doesn't work)

- Reliable wi-fi

- Cleanliness

- Toilet roll

- Staff speaking English

- Availability of hot drinks

- Availability of bottled water

- Having a window

- Soundproofing

- Staff presence

- Access control (keys being broken or weird protocol around them)

Pretty much all establishments serve breakfast which is a nice opportunity both to taste the various things on offer and also to interact with the staff and other guests. Breakfast is called ftour. Most places will give you some or all of the following:

- a fried egg, or occasionally a hard boiled egg

- jam

- marmalade

- amlou

- msemen (guaranteed)

- baguette

- jben (white cheese)

- butter

- Baghrir -- semolina pancake (sometimes)

- honey

- cake of some sort

- coffee

- orange juice

- fruit salad

- olives (likely Beldi)

Breakfast tends to be very sweet so almost every one had some sort of cake, normally a sponge cake. Msemen is also always present. Breakfast can run from any time 7 to maybe 10-11, but don't expect it to be on-the-dot whatever time you choose. (There's generally a much looser attitude taken towards time in Morocco.)

Miscellaneous

If you're coming from the UK you'll need an UK->EU adapter.

The country code is +212.

Roof terraces are a big thing in Morocco.

I have recently upgraded several systems to Trixie, this covers 2 servers and 1 desktop.

The servers are managed by Puppet (which has notably forked into OpenVox recently).

The actual upgrades went without errors. I have a script that I use for these upgrades now and they have gone without a hitch. I also am starting to gather a runbook for pre-operations, of which the main points are:

- Backup all PostgreSQL databases.

- Upgrade the kernel and reboot.

- Remove all external non-distribution packages.

Some of this (but not all) is automated with the script.

The biggest issue I saw was regarding the legacy

facts:

Legacy facts no longer collected or sent to Puppet Server. This initially

blocked everything, as the manifests used old syntax, so they wouldn't even

evaluate. I found a

setting

is useful, include_legacy_facts. I used this to get a toehold on the problem,

but I ended up reverting it once I fixed all the manifests.

This change looks something like this:

class main::fstab {

+ $my_hostname = $facts['networking']['hostname']

+

file { '/etc/fstab':

- source => "puppet:///modules/main/fstab/${hostname}.cf",

+ source => "puppet:///modules/main/fstab/${my_hostname}.cf",

Previously $hostname was provided in every scope as a global. Under the new

arrangement, only $facts is global, and everything else must be accessed as an

element of this hash.

I mentioned PostgreSQL before: currently my approach is to backup all databases, completely purge postgresql, and start afresh with the new version (modifying the version number in my manifests). This seems to be more robust and skips upgrade logic in the Debian packages. I am likely missing something, but this approach has worked for me.

last was removed, I don't care about this but as I'm something of a stickler

for Unix tradition I have installed wtmpdb globally in an attempt to preserve

this.

There are a few syntax changes in the newer

puppetlabs-postgresql

version. Notably, postgresql_password needs to be qualified with

postgresql:: prefix now.

The non-free-firmware apt repository is required on my laptop, a Thinkpad

T450, otherwise the wifi does not function, frustratingly.

On first install to the T450, grub does not show the graphical menu. I've had

to put in a weird hack to enable the grub menu to show properly. I found the

hack in a bug

report. This is

rather bad, luckily the fix is simple, it involves modifying the 00_header

file.

It's been reported 3 times:

- grub menu not shown on boot after upgrade to 2.12~rc1-9

- GRUB Screen Invisible (Black Screen)

- GRUB v2.12-7 doesn't show menu in graphical mode

There was a change in the syntax used for WSGI configurations in

puppetlabs-apache. I couldn't find much clear documentation for this, but

the diff looks something like:

- wsgi_daemon_process => 'gebunden',

- wsgi_process_group => 'gebunden',

-

- wsgi_daemon_process_options => {

- home => $backend_root,

- python-path => $backend_root

+ wsgi_daemon_process => {

+ 'gebunden' => {

+ home => $backend_root,

+ python-path => $backend_root

+ }

},

+ wsgi_process_group => 'gebunden'

Note that the python-path is now embedded into the overall

wsgi_daemon_process parameter.

I have removed support for some services that are no longer used.

telegram-desktop

telegram-desktop was removed from Trixie. I attempted to backport it, but faced a few obstacles. First, parts of the build dependencies also needed to be backported; second, being complex C++ code, they have long build processes that require a lot of memory. I eventually managed to do so, but only thanks to this intrepid bug reporter who has run into the same issue as me.

tt-rss

tt-rss was removed from Debian for trixie, but it has been re-uploaded to Sid.

In trixie there are issues with the php-php-gettext dependency. However I was

able to just rebuild the sid packages on trixie, effectively backporting them to

trixie. You need both of these packages. This version of tt-rss dates back to

2021 and uses some old code. As such, there are still a large amount of

warnings from uses of various deprecated PHP features; it's likely this

backporting strategy won't be able to continue forever, but for now tt-rss

continues to work.

virtualbox

Virtualbox has been removed from trixie. There are new upstream virtualbox packages available for trixie, though. I used 7.2_7.2.0-170228.

However, there is a strange issue which manifests in no VMs being able to

load, failing with a

"Guru Meditation" error. Background info

here. The kernel now always

loads KVM, which conflicts with Virtualbox; so the eventual solution was to

blacklist kvm and kvm_intel. This may have to be revisited at some point in

the future.

urxvt-unicode

I ran into this rather odd visual bug, which seems to be specific to xmonad.

Applied the fix lines-rewrap.patch, mentioned in that bug.

Backported package: rxvt-unicode

I've finally retired GitLab, as I found it was too heavyweight, I don't use most of the features, had a huge attack surface and the upgrade process was labrynthine.

There was a change to the default behaviour of /tmp to make it use a

ramdisk.

I'm OK with the default behaviour here for now, as I am not really hitting RAM

pressure on any of these machines.

I want to give a shoutout to anarcat for their excellent Trixie upgrade notes.

In K&R, we have an exercise 2-1. It's stated like this

Write a program to determine the ranges of char, short, int, and long variables, both signed and unsigned, by printing appropriate values from standard headers and by direct computation. Harder if you compute them: determine the ranges of the various floating-point types.

This exercise is only part of K&R 2nd edition, which uses C89 (ANSI C).

This exercise forced me to learn a fair bit about the representation of floating point numbers. Really, I should know this stuff already, but the last time that I learned it was when I was probably under 18 years old -- more than 20 years ago; and back then, I didn't enough knowledge to understand it. At the time, it was taught to me in a very first-principles way. I don't say this is the wrong way to teach, but at that time it didn't work for me.

I actually got this information from a fun visualization on YouTube, so there are a few remaining perks to living in 2025.

There are 3 main components to floating point representation, which are all

sub-components of a single type float. These are standardized under IEEE 754.

- A sign bit (size 1).

- An exponent (size 8).

- A mantissa (size 23).

To 'decode' a number, you essentially multiply out all the components, according to a specific formula.

SIGN * MANTISSA * EXPONENT

The strategy is basically analogous to scientific notation. Each part needs to

be decoded separately. The mantissa essentially encodes a repeatedly smaller

fractional value, implicitly starting with 1., getting closer and closer to 2

at its maximum value. E.g. if there was a mantissa with a size of 1, it could

either encode 1 or 1.5. If the mantissa had size 2, it could have values 1,

1.5, or 1.75.

The exponent is encoded as a binary integer, but with a few quirks. A 'bias' is

used instead of a sign bit. For instance, imagine an exponent of size 8. This

exponent would have 256 possible values (0-255). However a bias of 127 is used.

So subtracting the bias (255 - 127), we get 128 as the highest value. However

we know (from reading the spec... right?) that the top exponent value is

reserved to represent infinity. So the actual maximum is therefore 127. By

the same token, the lowest value is also reserved to represent NaN. So the

lowest value is therefore 0 - 127 + 1 = -126.

Apparently a side effect of this strategy is that precision gets worse as you approach the end of the range, but precision is better within the lower values. This does seem like a reasonable trade off for me. However I do intuitively feel that fixed-point seems like a better approach for most problems that I actually encounter in computing.

Note that the exercise has a big problem which is that the details of floating

point are actually not defined in C89. This is where language-lawyering the

wording of the question gets a bit murky -- from the most abstract perspective,

a float is just a method of storing a real number. You can't just impute a

mantissa/exponent structure to it. However, perhaps the intended reading is for

the word float itself to correspond directly to said representation, which is

assumed-background-knowledge for the reader? We can't read K&R's minds, unfortunately.

There are several things to note: using these facts about the representation

requires the ability to compute powers. Luckily, K&R have already introduced a

power routine, so we just need to rewrite this to work on floats. However, the

journeyman programmer might wonder, won't this suffer from the notorious

precision issues? Luckily, because multiplying by 2 operates directly on the

exponent and doesn't touch the mantissa at all, it's always precise. This means

that you can compute everything using the type in question (float or

double).

The other thing to note is that while FLT_MAX is a valid comparison here,

FLT_MIN is actually not that relevant. At least colloquially, when we talk

about the range of a value, we mean its full range from the maximally-negative

to the maximally-positive. So, strictly the endpoints would be -inf and

+inf. However FLT_MIN is not actually the maximally-negative value; that

would be -FLT_MAX.

The solution to the exercise that I eventually settled on uses a direct encoding of the representation rules for IEEE 754 floats: ex2-1.c.

However my intuitive sense of the intended solution to this problem suggests that it shouldn't rely on any knowledge of the internal structure. The closest that I could get to this was patterned on a suggestion from a Reddit user.

#include <float.h>

#include <stdio.h>

float get_stage1_max(void) {

float f = 1;

float prev;

while ((f * 2) != f) {

prev = f;

f *= 2;

}

return prev;

}

float calculate_by_approach(float start_point) {

float increment = start_point;

float f = start_point;

float prev = f;

while (1) {

increment /= 2;

if ((f + increment) == f)

break;

prev = f;

f += increment;

}

return prev;

}

int main(void) {

float result = calculate_by_approach(get_stage1_max());

printf("Stage 2 max: %g\n", result);

if (result == FLT_MAX) {

printf("This is the largest float.\n");

} else {

printf("This is not the largest float.\n");

}

}

This calculates the value in 2 stages: first it will calculate the largest float value that's reachable by doubling. Then it will repeatedly add smaller values, dividing by 2 until infinity is reached, and give the previous value before that happens. Realistically this does rely on a certain amount of assumptions about the behaviour of float arithmetic and therefore (by implication) of the internal structure of a float, but I believe that this is the closest one can get to a fully 'abstract' solution; happy to be corrected, though.

This exercise really illustrates a characteristic of K&R: the fact that they can forcibly-entail so much complexity from just this tiny statement, just an appendage to an exercise.

I've visted Cambridge recently and visited some nice pubs. The Blue Moon: funky, vibey place with a nice beer garden. The Cambridge Blue: lovely bar staff, absolutely rammed in the beer garden when I went there, has a very nice atmosphere though, and a huge bottle shop. The Devonshire Arms: loved this place, a kind of slightly anarcho/left vibe in the clientele, ramshackle in design and with a nice, open layout, super friendly bar staff.

Also visited The Baron of Beef, notorious for its fight between Clive Sinclair and the Acorn guy in Micro Men, but I'm almost 100% sure that the scene wasn't actually filmed there, the outside is pictured in the film though. The Maypole was also a generally pleasant affair.

I have a short gap in my reading; here are some options:

- Kafka diaries

- Kristeva - Revolution in Poetic Language

- The Politics of Time

- Richard Norris - Strange Things are Happening

- Simon Reynolds latest

- Emma Dowland - Care Crisis

- Abraham & Torok - Shell & the Kernel

- Milan Kundera (Misc)

- David Graeber - Debt

- Tomorrow and Tomorrow

- E. Rashkin - Psychoanalysis of Narrative

- Ferenczi - Thalassa

- Michel Foucault - History of Sexuality Vol 1

- Leader - What is Madness

I am reading (well, have now finished) L'etranger by Albert Camus. It's made significantly easier by the fact that I can look words up on my ereader. However, the ereader doesn't allow looking up verb conjugations, meaning that automatic lookups are mostly limited to the infinitive form. As the infinitive doesn't occur very often, this means that lots of manual lookups happen.

Most of the sentences seem to be written in the 'passé composé' with the verb 'avoir'. eg j'ai mangé = I ate. I initially mentally translate this as the English present perfect -- "I have eaten". I then need to mentally reshape it into the English simple past "I ate".

Some of the hardest parts:

Words meaning when -- alors, quand, lorsque. I don't have a clue what the rule is for these if there is any, and they all seem to have a bunch of different other meanings.

Words meaning 'still' -- toujours, encore, quand meme. I don't get the rule for this.

The word plus -- Such a simple word and seemingly with only one concrete meaning yet it's deployed in thousands of different shades of meaning, and a surprising number of the usages are not particularly obvious. I think I basically never recognize the negative variation ne...plus, "not any more".

Irregular past participles -- I basically have to remember all of these because I don't write them down. Luckily there aren't that many of them, but some of them are a pain -- particularly the irregular verbs with voir endings have past participles that often seem bizarre.

Conjugations of être -- The fact that être conjugates into forms that start with s- and forms that start with e- is a never-ending source of bewilderment. It helps to remember that the verb is formed from mashing together the Latin verbs forms esse and stare. The future tense of être is particularly confusing.

Various meanings for sentence with on as the subject -- I haven't quite grasped how to deal with this because it seems like there are maybe 3 different forms of this? One is the "us" meaning, one is an impersonal pronoun (like English 'one'), and I feel like there's one other form. Having reread about this now, the confusion makes sense, as we don't have quite such an ambiguous pronoun in English.

The ne...que construction meaning only -- this normally causes a big double take, if I can even recognize it at all, and I misread all of these sentences until I learned about it.

Multi-word phrases (idioms) are very difficult. These are used all over the place and they can't be looked up in the dictionary. There's really no choice other than rote memorization of these.

I created an Anki deck from all of the vocabulary that I wrote down, this has every card tagged with parts of speech, and with limited English translations based on the usage in L'etranger.

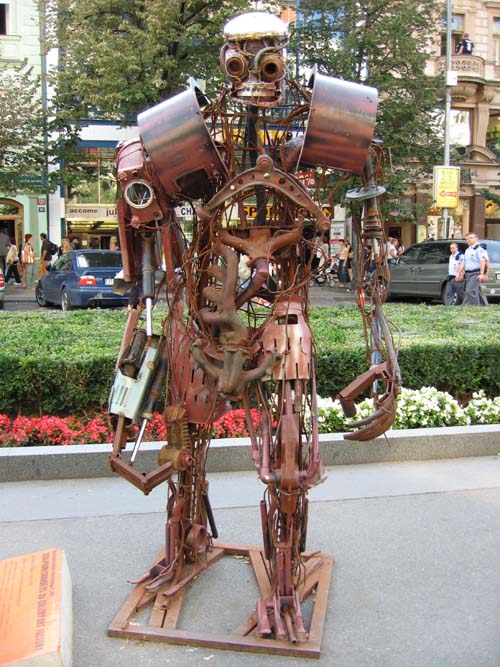

Stepan Tesar, Czech Republic - "Robot" 2.4x1x1 meters, iron, welded scrap. Robot machine is presented as human friend - helper or visitor, who wanders through the Wenceslas Square. The sculpture tries to bridge the barriers between people and artificial intelligence and integrate such 'modern slaves' in the society.

Photo by Courtney Powell

[!meta title="Obholzer"]]

Obholzer's book has some interesting aspects to it.

This blog is powered by coffee and ikiwiki.